Image recognition with LLM

We compared Large Language Models against traditional machine learning for detecting electrical fuse states in images. Here is what we found about accuracy, cost, and when each approach makes sense.

Recognising the growing use of Large Language Models in image recognition, we decided to investigate their applicability to a real project task. This post covers the business perspective — for the technical implementation details, see Image recognition with LLM: A dev's how-to.

The Problem

Our task was to create a model that could determine whether electrical fuses were installed or not installed within electrical boxes.

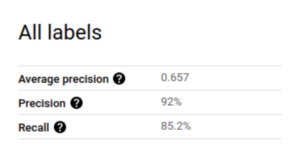

For the initial machine learning approach, we used Google Vision service trained on a substantial customer-provided dataset hosted in cloud infrastructure.

Testing LLMs for Image Recognition

When we turned to LLMs, we found that relatively few major models support image analysis. We evaluated the available options using two distinct approaches:

Zero-Shot Learning

We presented the model with images and a description of the task along with the desired output format — no examples provided. This is the simplest and cheapest approach.

Few-Shot Learning

We provided several example images with correct answers before presenting the new task. This consistently produced better results across all models, though at higher token costs due to the additional images in the prompt.

Results

| Model | Zero-shot Cost | Zero-shot Precision | Few-shot Cost | Few-shot Precision |

|---|---|---|---|---|

| gpt-4o | $0.0135 | 58% | $0.04 | 85% |

| gpt-4o-mini | $0.0008 | 21% | $0.0024 | 58% |

| gemini-2.0-flash-lite-preview-02-05 | $0.0002 | 21% | $0.0006 | 75% |

Traditional machine learning achieved superior accuracy, but it required a substantial upfront investment of roughly €2,000 in development effort alone.

When Does Each Approach Make Sense?

The right choice depends on your specific situation:

Use traditional ML when:

- You need maximum accuracy

- You have a large labeled dataset available

- The model will be used frequently at scale

- Latency is critical (LLMs are significantly slower at inference)

Use LLMs when:

- Usage is infrequent — at around 1,000 runs per year, gpt-4o few-shot costs approximately €400 vs. ~€2,000 for custom ML development

- You need a quick prototype without dataset collection

- You want to avoid upfront development investment

Key considerations:

- Data privacy: Self-hosting vs. sending data to external APIs like OpenAI or Google

- Response speed: LLMs have significantly higher latency than a locally deployed traditional model

- Model selection: For this task,

gemini-2.0-flash-liteoffered the best pricing-to-performance ratio among the models we tested

Conclusion

LLMs are a genuinely viable option for image recognition tasks, particularly when usage volume is low and avoiding upfront development cost matters. The few-shot approach with gpt-4o reached 85% precision — close enough to traditional ML for many real-world applications. The optimal choice depends on your project's usage frequency, latency tolerance, data privacy requirements, and budget constraints.